Extensions for Microsoft.Extensions.AI

Full support for Grok Live Search and Reasoning model options.

// Sample X.AI client usage with .NET

var messages = new Chat()

{

{ "system", "You are a highly intelligent AI assistant." },

{ "user", "What is 101*3?" },

};

var grok = new GrokChatClient(Env.Get("XAI_API_KEY")!, "grok-3-mini");

var options = new GrokChatOptions

{

ModelId = "grok-3-mini-fast", // 👈 can override the model on the client

Temperature = 0.7f,

ReasoningEffort = ReasoningEffort.High, // 👈 or Low

Search = GrokSearch.Auto, // 👈 or On/Off

};

var response = await grok.GetResponseAsync(messages, options);

Tip

Env is a helper class from Smith,

a higher-level package typically used for dotnet run program.cs scenarios in

.NET 10. You will typically use IConfiguration for reading API keys.

This package does not depend on Smith.
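As a rough sketch of the IConfiguration route mentioned in the tip, the API key could be read as shown below. This assumes the Microsoft.Extensions.Configuration.EnvironmentVariables package is referenced and that the key is stored in an environment variable named XAI_API_KEY, as in the sample above. The error message is illustrative only.

```csharp
using System;
using Microsoft.Extensions.Configuration;

// Build a configuration that reads from environment variables.
// Assumption: other providers (user secrets, appsettings.json) could be
// added here as well, depending on how the app is hosted.
IConfiguration configuration = new ConfigurationBuilder()
    .AddEnvironmentVariables()
    .Build();

// Fail fast with a clear message when the key is missing.
string apiKey = configuration["XAI_API_KEY"]
    ?? throw new InvalidOperationException("XAI_API_KEY is not configured.");

// apiKey can now be passed wherever the samples use Env.Get("XAI_API_KEY")!.
Console.WriteLine($"Loaded an API key of length {apiKey.Length}.");
```

In a hosted app, the IConfiguration instance would normally come from dependency injection rather than being built by hand.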

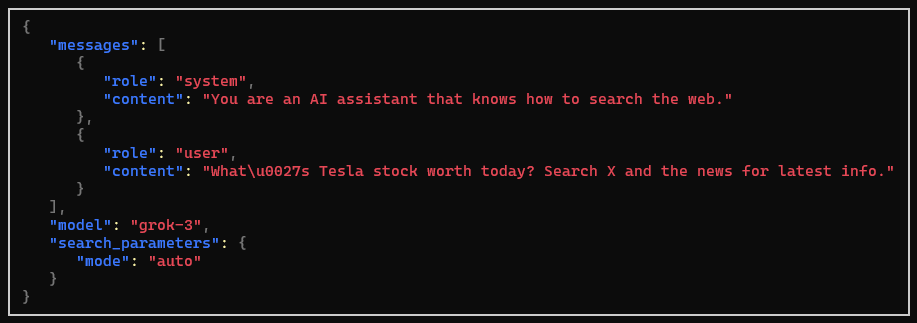

Search can alternatively be configured using a regular ChatOptions

and adding the HostedWebSearchTool to the tools collection, which

sets the live search mode to auto like above:

var messages = new Chat()

{

{ "system", "You are an AI assistant that knows how to search the web." },

{ "user", "What's Tesla stock worth today? Search X and the news for latest info." },

};

var grok = new GrokChatClient(Env.Get("XAI_API_KEY")!, "grok-3");

var options = new ChatOptions

{

Tools = [new HostedWebSearchTool()] // 👈 equals setting GrokSearch.Auto

};

var response = await grok.GetResponseAsync(messages, options);

The support for OpenAI chat clients provided in Microsoft.Extensions.AI.OpenAI falls short in some scenarios:

- Specifying per-chat model identifier: the OpenAI client options only allow setting a single model identifier for all requests, at the time OpenAIClient.GetChatClient is invoked.

- Setting reasoning effort: the Microsoft.Extensions.AI API does not expose a way to set reasoning effort for reasoning-capable models, which is very useful for some models like o4-mini.

To solve both issues, this package provides an OpenAIChatClient that wraps the underlying

OpenAIClient and allows setting the model identifier and reasoning effort per request, just

like the above Grok examples showed:

var messages = new Chat()

{

{ "system", "You are a highly intelligent AI assistant." },

{ "user", "What is 101*3?" },

};

IChatClient chat = new OpenAIChatClient(Env.Get("OPENAI_API_KEY")!, "o3-mini");

var options = new ChatOptions

{

ModelId = "o4-mini", // 👈 can override the model on the client

ReasoningEffort = ReasoningEffort.High, // 👈 or Medium/Low, extension property

};

var response = await chat.GetResponseAsync(messages, options);

The underlying HTTP pipeline provided by the Azure SDK allows setting up policies that can observe requests and responses. This is useful for monitoring the requests and responses sent to the AI service, regardless of the chat pipeline configuration used.

Observers are added to the OpenAIClientOptions (or more properly, any

ClientPipelineOptions-derived options) using the Observe method:

var openai = new OpenAIClient(

Env.Get("OPENAI_API_KEY")!,

new OpenAIClientOptions().Observe(

onRequest: request => Console.WriteLine($"Request: {request}"),

onResponse: response => Console.WriteLine($"Response: {response}")

));

You can, for example, collect both requests and responses for payload analysis in tests as follows:

var requests = new List<JsonNode>();

var responses = new List<JsonNode>();

var openai = new OpenAIClient(

Env.Get("OPENAI_API_KEY")!,

new OpenAIClientOptions().Observe(requests.Add, responses.Add));

We also provide a shorthand factory method that creates the options and observes it in a single call:

var requests = new List<JsonNode>();

var responses = new List<JsonNode>();

var openai = new OpenAIClient(

Env.Get("OPENAI_API_KEY")!,

OpenAIClientOptions.Observable(requests.Add, responses.Add));

Given the following tool:

MyResult RunTool(string name, string description, string content) { ... }

You can use the ToolFactory and the FindCalls<MyResult> extension method to

locate the function invocation, its outcome and the typed result for inspection:

AIFunction tool = ToolFactory.Create(RunTool);

var options = new ChatOptions

{

ToolMode = ChatToolMode.RequireSpecific(tool.Name), // 👈 forces the tool to be used

Tools = [tool]

};

var response = await client.GetResponseAsync(chat, options);

var result = response.FindCalls<MyResult>(tool).FirstOrDefault();

if (result != null)

{

// Successful tool call

Console.WriteLine($"Args: '{result.Call.Arguments.Count}'");

MyResult typed = result.Result;

}

else

{

Console.WriteLine("Tool call not found in response.");

}

If the typed result is not found, you can also inspect the raw outcomes by finding

untyped calls to the tool and checking their Outcome.Exception property:

var result = response.FindCalls(tool).FirstOrDefault();

if (result.Outcome.Exception is not null)

{

Console.WriteLine($"Tool call failed: {result.Outcome.Exception.Message}");

}

else

{

Console.WriteLine($"Tool call succeeded: {result.Outcome.Result}");

}

Additional UseJsonConsoleLogging extensions for rich JSON-formatted console logging of AI requests

are provided at two levels:

- Chat pipeline: similar to UseLogging.

- HTTP pipeline: lowest possible layer before the request is sent to the AI service, can capture all requests and responses. Can also be used with other Azure SDK-based clients that leverage ClientPipelineOptions.

Note

Rich JSON formatting is provided by Spectre.Console.

The HTTP pipeline logging can be enabled by calling UseJsonConsoleLogging on the

client options passed to the client constructor:

var openai = new OpenAIClient(

Env.Get("OPENAI_API_KEY")!,

new OpenAIClientOptions().UseJsonConsoleLogging());

For a Grok client with search enabled, a request would look like the following:

Both alternatives receive an optional JsonConsoleOptions instance to configure

the output, including truncating or wrapping long messages, setting panel style,

and more.

The chat pipeline logging is added similarly to other pipeline extensions:

IChatClient client = new GrokChatClient(Env.Get("XAI_API_KEY")!, "grok-3-mini")

.AsBuilder()

.UseOpenTelemetry()

// other extensions...

.UseJsonConsoleLogging(new JsonConsoleOptions()

{

// Formatting options...

Border = BoxBorder.None,

WrapLength = 80,

})

.Build();